Abstract

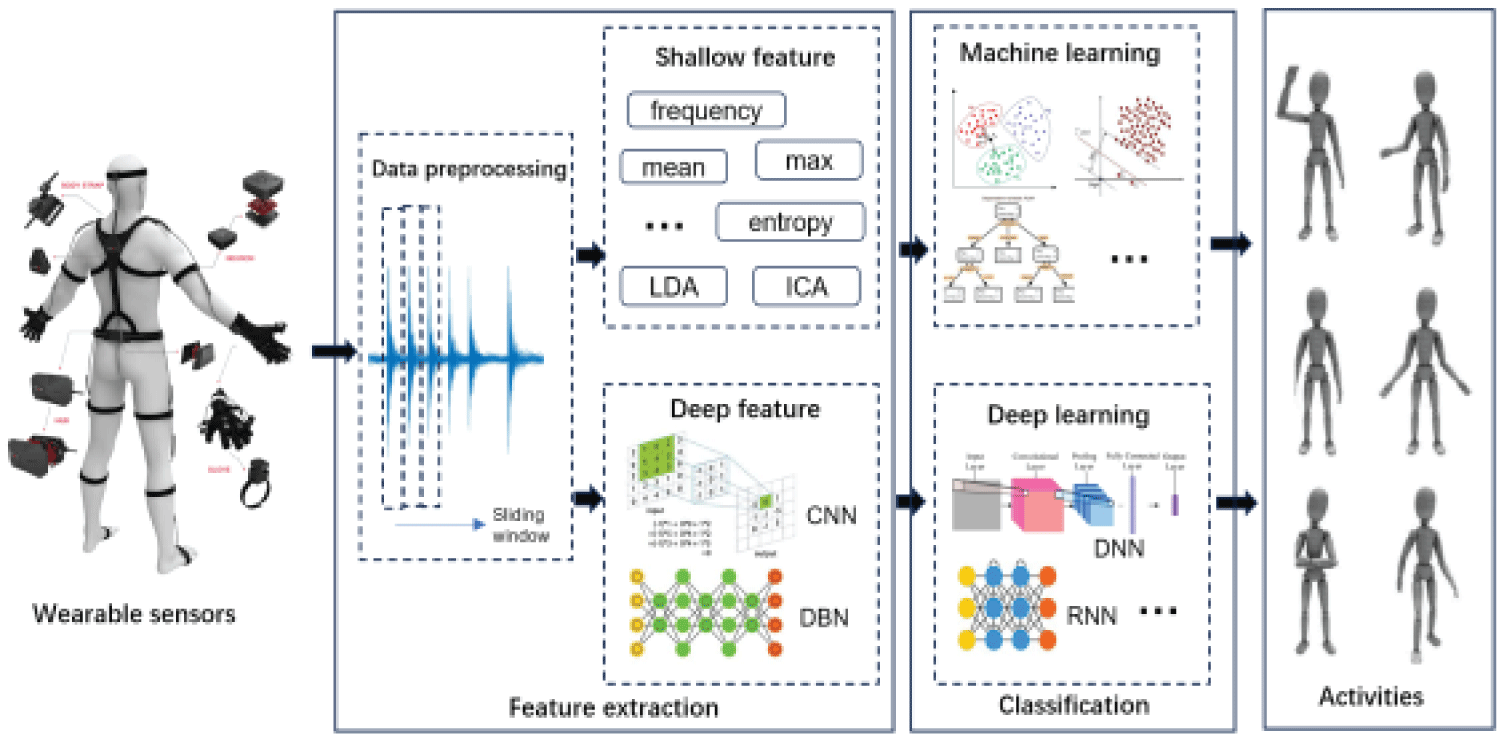

The rapid development of wearable technology creates new opportunities for motion data processing and classification. Wearable sensors can monitor physiological and motion signals of the human body in real time, providing rich data sources for health monitoring, sports analysis, and human-computer interaction. This paper reviews motion data processing and classification techniques based on wearable sensors, covering feature extraction techniques, classification techniques, and open challenges and future directions. First, it introduces the research background of wearable sensors and their applications in health monitoring, sports analysis, and human-computer interaction. It then describes the main stages of motion data processing and classification: feature extraction, model construction, and activity recognition. For feature extraction, the review covers shallow and deep feature extraction; for classification, it covers traditional machine learning models and deep learning models. Finally, it identifies current challenges and outlines directions for future research. By examining feature extraction and classification techniques for wearable sensor time series data, this paper aims to support the application and development of wearable technology in health monitoring, sports analysis, and human-computer interaction.

Index Terms: Activity recognition, Wearable sensor, Feature extraction, Classification